Choosing the best SAST tool in 2026 means balancing detection accuracy, developer experience, AI capabilities, and integration with your existing workflow. Static Application Security Testing (SAST) analyzes source code at rest to catch vulnerabilities before they reach production, and the landscape has shifted significantly as vendors add AI-powered triage, remediation, and even agentic fix workflows.

This guide compares 10 leading SAST tools head to head so you can make an informed decision based on your team’s priorities - whether that’s reducing false positives, accelerating remediation, or catching business-logic flaws that pattern-based scanners miss.

Quick comparison: best SAST tools at a glance

| Tool | AI approach | False-positive handling | Auto-fix | Languages | Best for |

|---|---|---|---|---|---|

| Corgea | AI-native (LLM-driven detection) | <5% FP rate; AI triage | Yes - AI-generated fixes with validation | 20+ | Teams needing low noise + business-logic detection |

| Checkmarx | AI-assisted (query builder + remediation) | AI Query Builder reduces FPs | Yes - IDE auto-remediation | 35+ | Enterprises with complex governance needs |

| Snyk Code | Hybrid (symbolic + ML) | Low FP; developer feedback loop | Yes - Agent Fix with retesting | 19+ | Developer-first teams wanting IDE-native scanning |

| Semgrep | Rule-based + AI assistant | Noise filtering reduces FPs up to 98% | Yes - Assistant autofix in PRs | 40+ | Teams wanting open-source control + AI triage |

| Veracode | ML + RAG remediation | <1.1% FP rate (vendor-reported) | Yes - up to 5 patches per flaw | 100+ | Orgs prioritizing accuracy and compliance |

| GitHub Advanced Security | Semantic (CodeQL) + Copilot Autofix | Curated queries; can be noisy without tuning | Yes - Copilot-generated patches | 10+ | GitHub-native teams |

| Fortify (OpenText) | LLM + 20yr SAST history | Prediction model classifies true/false positives | Yes - contextualized code fixes | 35+ | Existing Fortify customers adding AI |

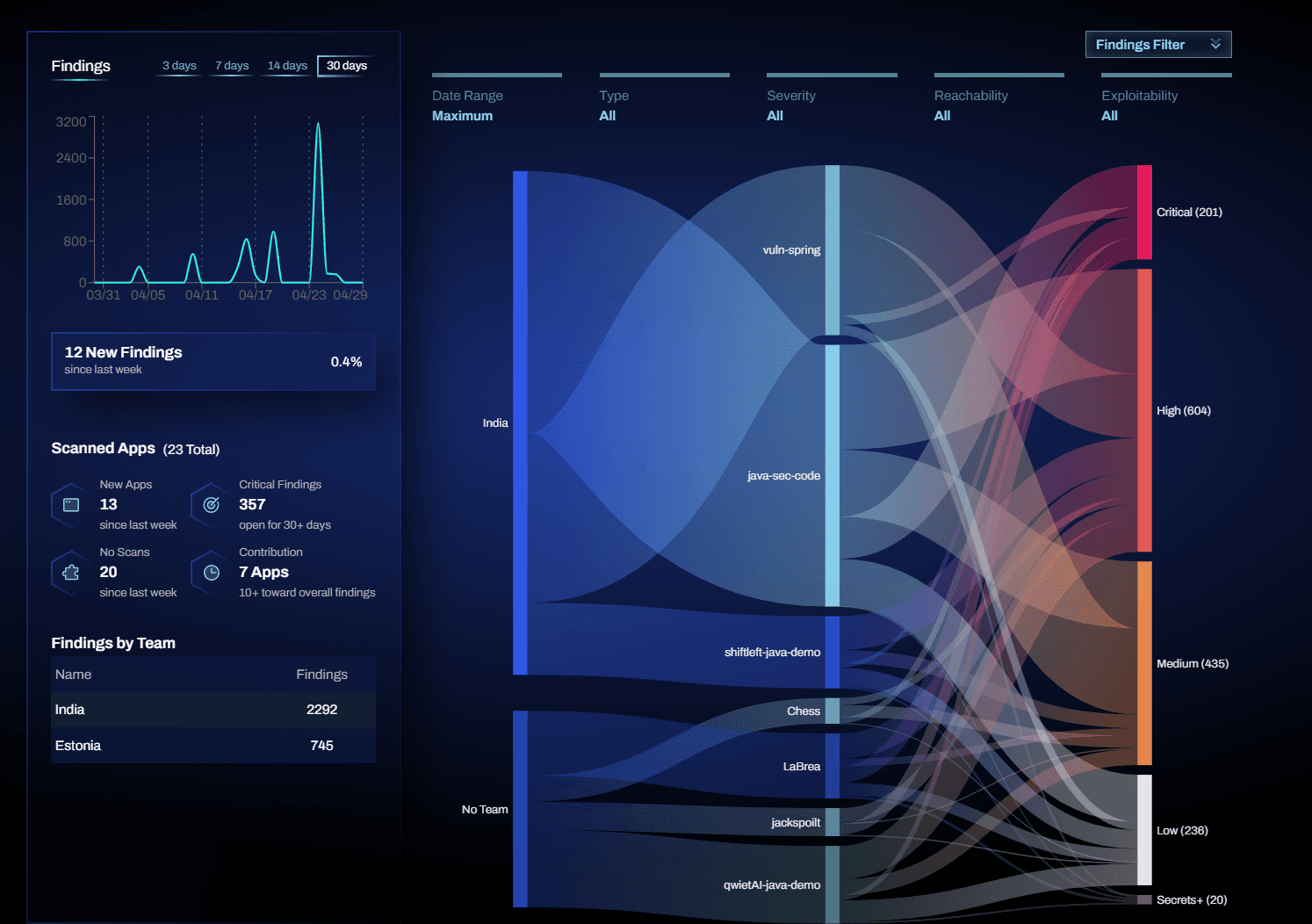

| Qwiet AI (Harness) | CPG + LLM remediation | Reachability-based filtering | Yes - AutoFix via LLM | 15+ | Teams optimizing for scan speed + triage reduction |

| SonarQube | Rule-based + AI CodeFix | Precision-focused rules; low noise in default config | Yes - AI CodeFix (cloud) | 30+ | Broad code-quality + security in one platform |

| Endor Labs | Context-aware analysis | Reachability + function-level analysis | Limited | 10+ | SCA-heavy teams adding SAST with deep reachability |

What is SAST?

Static Application Security Testing (SAST) finds security issues by analyzing source code before it runs. Instead of testing a live application, SAST scans code at rest - typically during development or in CI/CD pipelines - to catch vulnerabilities early, when they are cheaper and easier to fix. Common issues SAST looks for include injection flaws, insecure authentication logic, unsafe data handling, and misused APIs. Because SAST runs without executing the app, it fits naturally into “shift-left” security workflows.
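To make "injection flaw" concrete, here is a minimal sketch of the kind of code a SAST scanner is meant to flag, next to the usual fix. The table, function names, and payload are made up for illustration and do not come from any tool in this guide; the example uses only Python's standard `sqlite3` module.

```python
import sqlite3


def make_demo_db():
    # Hypothetical in-memory database used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, username):
    # Vulnerable: untrusted input is concatenated into the SQL text,
    # so the input can change the structure of the query itself.
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Safer: a parameterized query keeps the input as data, not SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "x' OR '1'='1"
    print(find_user_unsafe(conn, payload))  # every row comes back
    print(find_user_safe(conn, payload))    # no rows match
```

A scanner does not need to run this code to see the problem: the flow from the `username` parameter into a string-built query is visible in the source.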

Traditional SAST vs. AI-powered SAST

Traditional SAST relies on rules, patterns, and data-flow analysis. AI changes the equation in three ways:

- Detection: AI-native tools use LLMs and contextual reasoning to find business-logic flaws and authentication issues that pattern matching misses.

- Triage: AI groups, explains, and prioritizes findings so teams spend less time sorting noise.

- Remediation: AI generates fix suggestions - and in some platforms, validates them before they reach a pull request.

Most “AI-powered SAST” tools bolt AI onto a traditional engine for better triage and fix guidance. “AI-native SAST” tools use AI as part of the scanning process itself, analyzing code more like a security engineer would. Both approaches have trade-offs, and the right choice depends on where your team’s bottleneck is.
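As a rough illustration of what "rules and patterns" means, the sketch below implements a single toy rule with Python's standard `ast` module: flag direct calls to `eval()`. It is not how any product in this guide works internally; the sample code and function names are invented for the example.

```python
import ast

# Hypothetical code under analysis. The second function has a logic flaw
# (no ownership check) that a syntactic rule has nothing to match on.
SAMPLE = '''
def run(user_expr):
    return eval(user_expr)

def delete_invoice(user, invoice_id, db):
    # Missing: a check that `user` is allowed to delete this invoice.
    db.delete("invoices", invoice_id)
'''


def find_eval_calls(source):
    """Return the line numbers of direct calls to eval() in the source."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id == "eval"
        ):
            hits.append(node.lineno)
    return sorted(hits)


if __name__ == "__main__":
    print(find_eval_calls(SAMPLE))
```

The rule finds the `eval()` call but says nothing about `delete_invoice`, because a missing authorization check is an absence rather than a pattern. That gap is the one contextual, AI-native detection claims to address.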

The 10 best SAST tools in 2026

1. Corgea

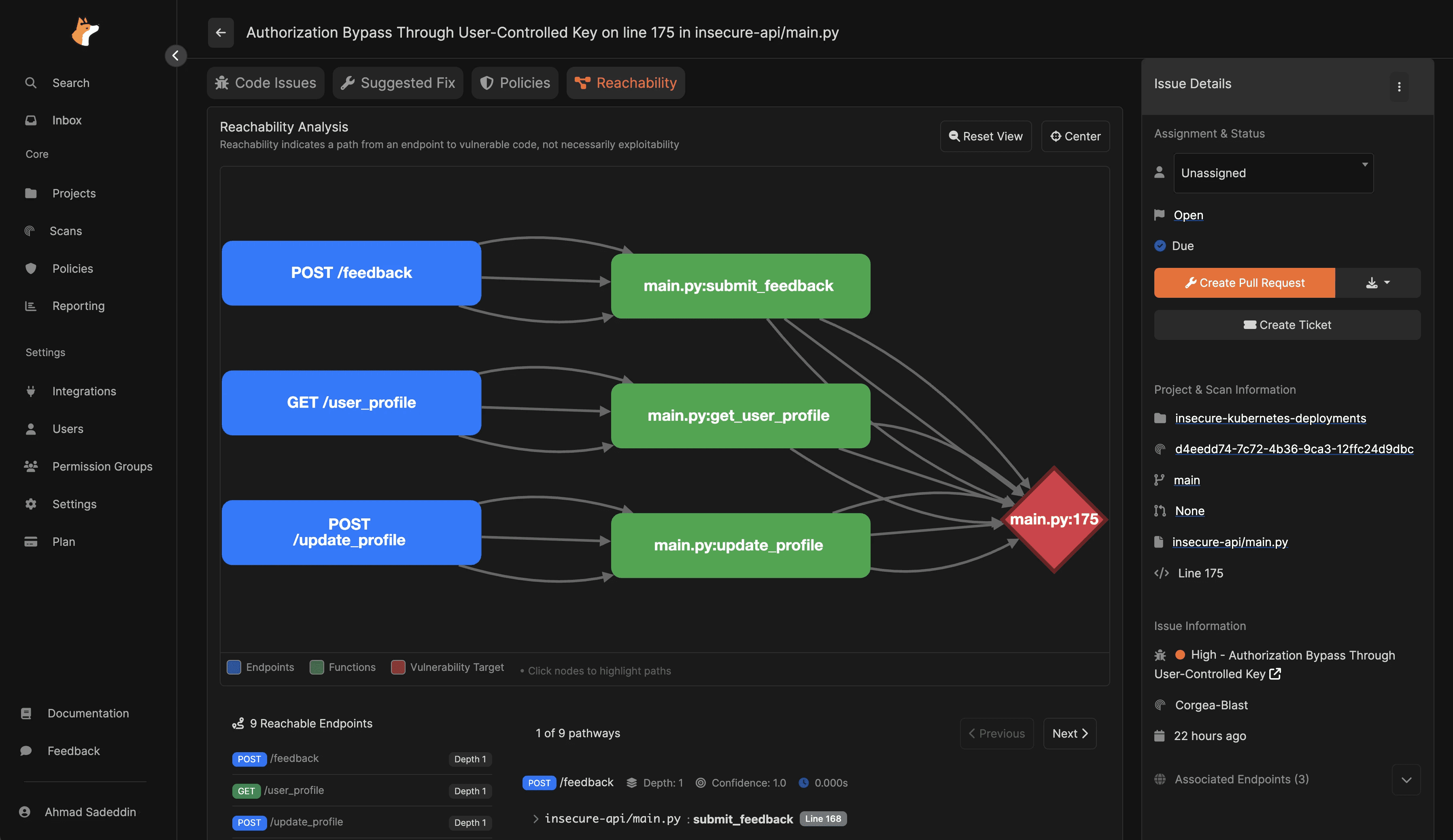

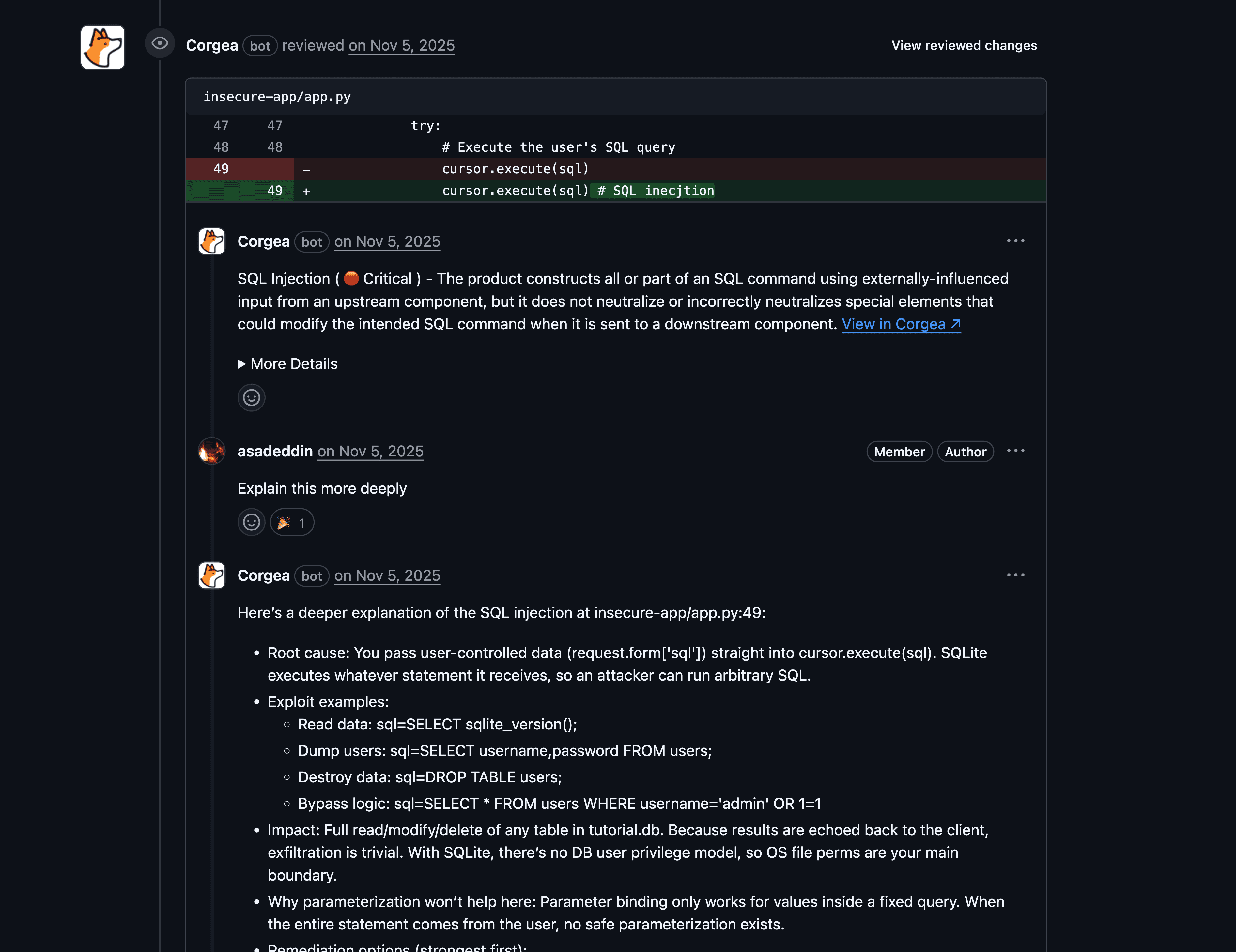

Corgea is an AI-native SAST platform that uses large language models as part of the core scanning engine, not just for post-scan triage.

How it uses AI

- Uses LLMs to understand code context and logic, aiming to reduce false positives compared to pattern-only detection.

- PolicyIQ lets teams provide business and environment context in natural language, which the scanner uses to improve detection accuracy and fix generation.

- Developer workflow integration (IDE + PR-level fix suggestions) keeps remediation in-flow.

Key strengths

- AI-native posture: AI is part of contextual reasoning during scanning, not only summarization of results.

- Policy-driven contextualization tailors results and fixes to how your business actually works.

- SAST reachability analysis resolves endpoints and creates a call graph to the vulnerable function to show if it is reachable.

- An independent Latio.tech report found Corgea was the best auto-fixing tool on the market.

Potential limitations

- As a newer entrant, independent benchmarking data beyond the Latio report is limited - run a proof-of-value on your own codebase.

- AI-native SAST can reduce false positives significantly, but no tool eliminates them entirely.

- If purchasing decisions depend on analyst quadrants, a more established vendor may be a safer internal sell.

Best for: Teams drowning in traditional SAST noise who want higher signal, business-logic detection, and AI-generated fix guidance.

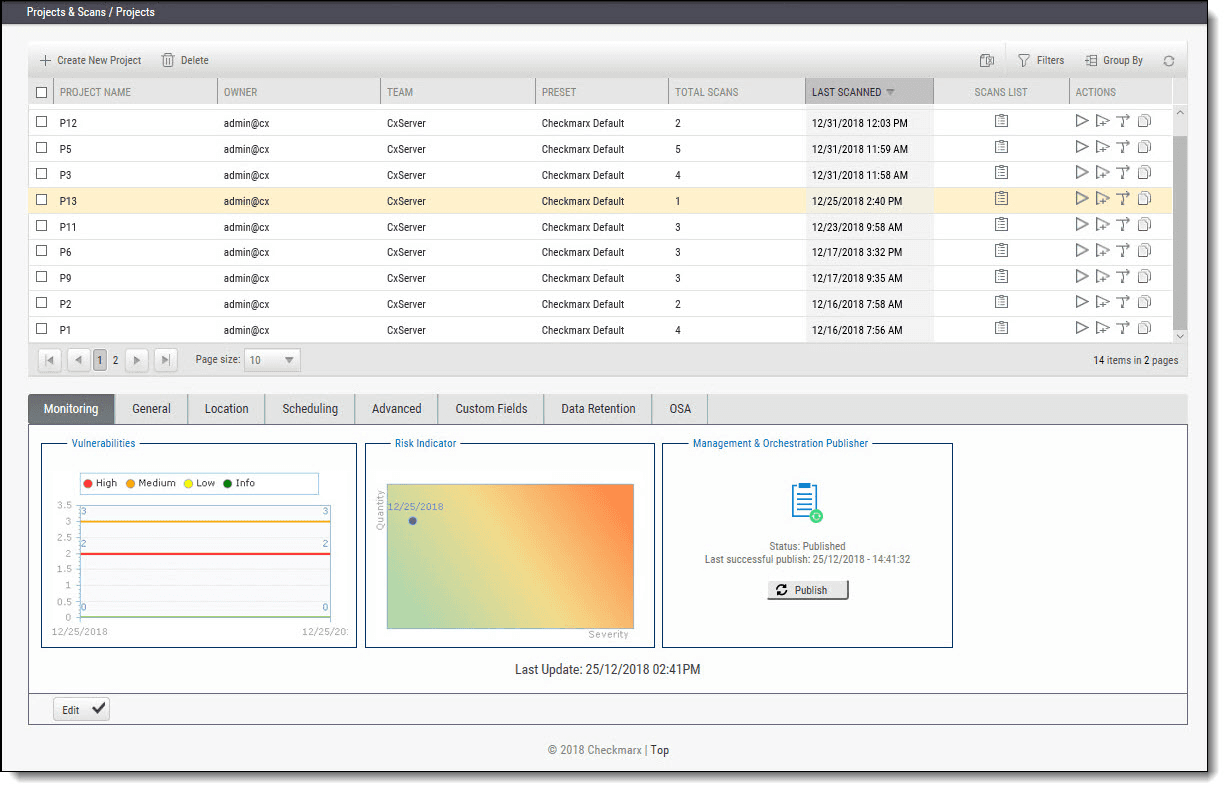

2. Checkmarx

Checkmarx is a long-established AppSec platform with enterprise SAST at its core. In 2026, it layers AI onto customization and remediation workflows rather than replacing its traditional detection engine.

How it uses AI

- AI Query Builder helps teams customize SAST detection logic using natural language so the scanner better understands organization-specific patterns.

- “AI remediation at scale” automates or accelerates fixes across SAST, SCA, secrets, and IaC findings.

- Developer Assist supports agentic AI remediation in the IDE via an MCP server, generating remediated code developers can accept or refine.

Key strengths

- Strong enterprise orientation: customization and governance are first-class capabilities.

- AI-assisted detection customization (query building) addresses the common “we can’t express our codebase patterns” problem.

- Broad category coverage beyond SAST (SCA, secrets, IaC) in a single platform.

Potential limitations

- The breadth and flexibility that make Checkmarx attractive for enterprises can feel heavy for smaller teams - expect more platform-level setup than “drop-in SAST.”

- Access and experience can vary by IDE and AI-assistant tier (Developer Assist docs differentiate limited vs. full access).

- Traditional detection engine may miss complex business-logic flaws that AI-native scanners catch.

Best for: Large organizations with mature AppSec programs, complex governance, and multiple scanning categories who want AI-accelerated remediation on top of a proven SAST backbone.

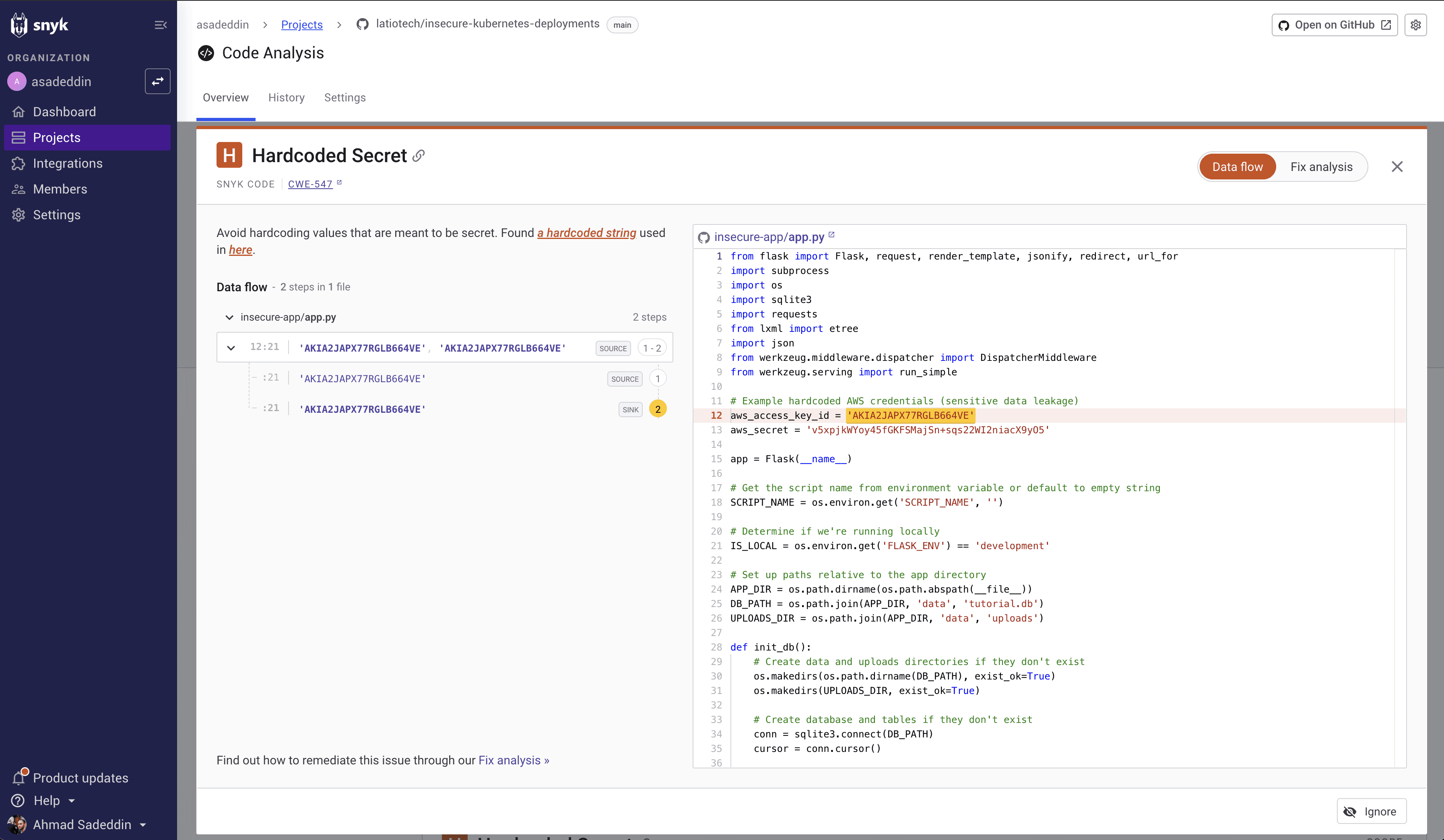

3. Snyk Code

Snyk Code is widely adopted as a developer-first SAST tool, grounded in DeepCode AI for detection and Agent Fix for auto-fix workflows.

How it uses AI

- DeepCode AI is built on 25M+ data-flow cases, 19+ languages, and multiple AI models to find, prioritize, and autofix vulnerabilities.

- Agent Fix produces up to five potential fixes and automatically retests each fix for quality using Snyk Code’s engine.

- Snyk emphasizes its auto-fix approach is not “LLM-only” - pre-screening and validation reduce risky hallucinations.

Key strengths

- Developer experience is a clear priority: real-time IDE feedback, fast scans, and actionable PR-level findings.

- Fix workflow includes auto-retesting and quality checks after generation - the kind of guardrail buyers should demand.

- Broad platform coverage (SAST, SCA, containers, IaC) under one roof.

Potential limitations

- AI-generated fix coverage varies by language, flaw type, and configuration - run a proof-of-value on your top vulnerability classes.

- Custom rule authoring requires learning a dedicated query language, which is more complex than natural-language policy definitions.

- The Latio.tech report noted that Snyk can only generate fixes during a rescan in IDE extensions, limiting practical auto-fix utility in some workflows.

Best for: Engineering teams that measure success by developer adoption and fast remediation, not just scanning coverage.

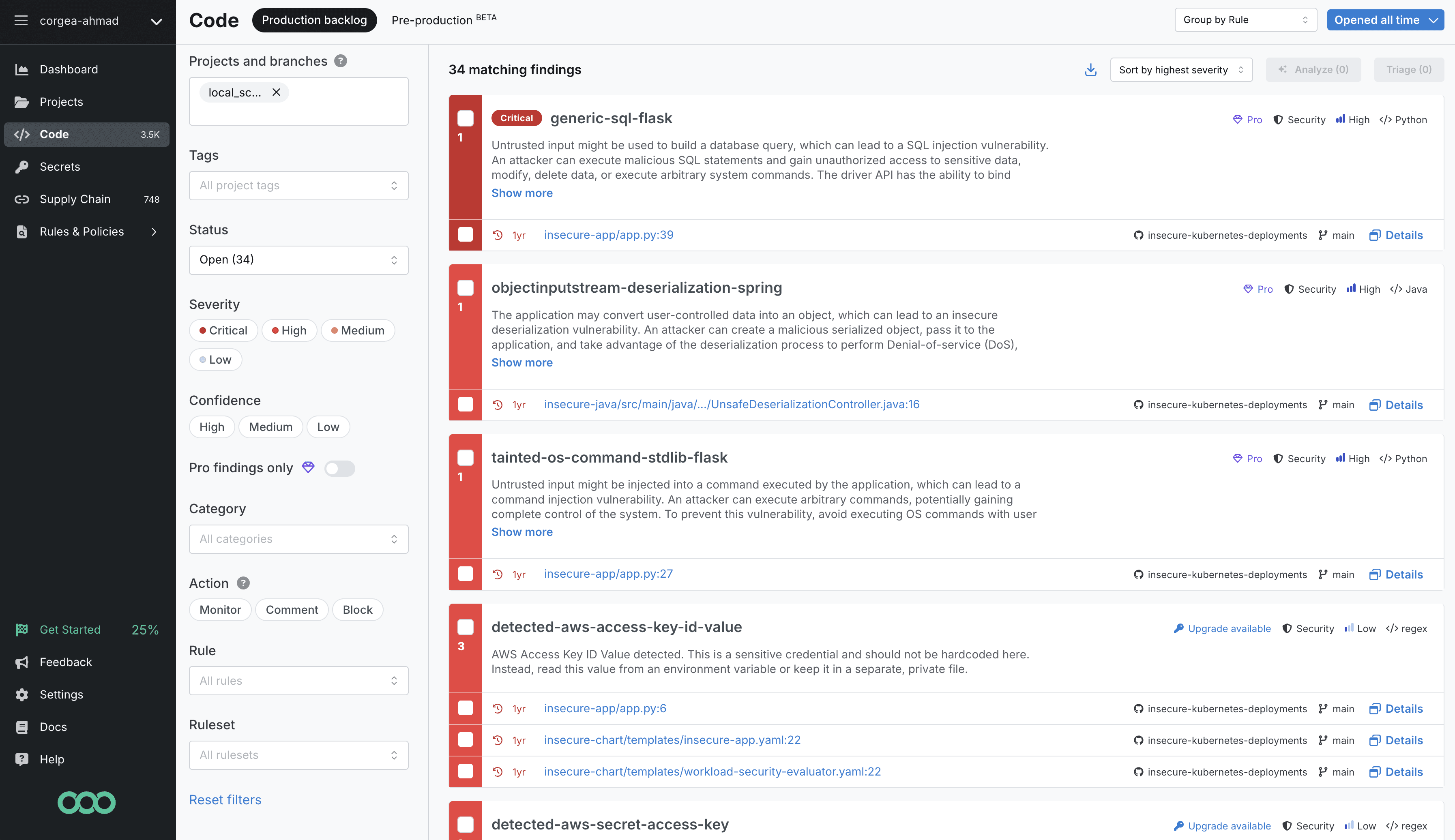

4. Semgrep

Semgrep is known for developer-friendly static analysis with a fast, pattern-matching core. In 2026, Semgrep Assistant adds AI-powered triage, noise filtering, and remediation guidance.

How it uses AI

- Semgrep Assistant combines static analysis with LLMs to filter noise, explain findings, and provide remediation guidance in PR workflows.

- Noise Filtering suppresses likely false positives by understanding mitigating context (Semgrep cites a “20% reduction the day you turn it on”).

- “Memories” and auto-triage reuse your past triage decisions to avoid repeating the same work.

Key strengths

- Open-source foundation with a strong community rule library and transparent documentation.

- Very fast scans (median CI scan time ~10 seconds) with minimal CI overhead.

- PR-centric workflow: explanations and suggested fixes appear where developers work.

- Independent testing (Doyensec) found Semgrep’s security-focused configuration produced zero false positives on OWASP benchmarks.

Potential limitations

- Some Assistant features are not available for custom or community rules.

- Noise filtering is explicitly labeled beta - important to know if you’re planning to operationalize at scale.

- Pattern-based detection has inherent limits for complex business-logic and cross-file data-flow issues.

Best for: AppSec engineers and developer-platform teams who want a fast, transparent, customizable SAST foundation augmented by AI for triage bottlenecks.

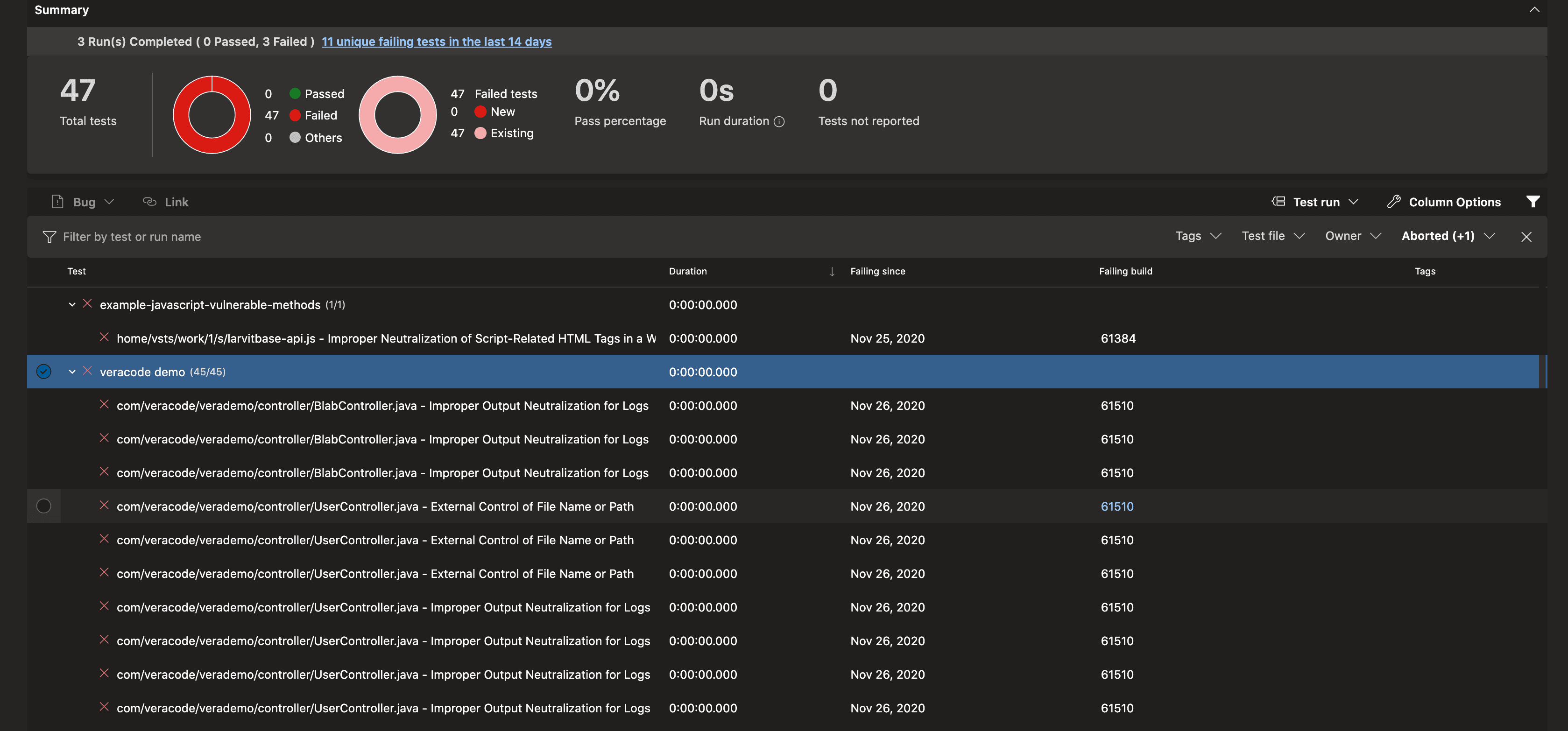

5. Veracode

Veracode is a major application security vendor with a well-established static analysis platform. Its 2026 AI story centers on Veracode Fix for AI-generated remediation.

How it uses AI

- Veracode Fix uses a machine-learning model plus retrieval-augmented generation (RAG) against Veracode’s remediation database to generate secure patches.

- Fix analyzes CWE ID, programming language, sink function, and surrounding code context, then returns up to five code patches per flaw.

- Veracode emphasizes “responsible design” - quality gates to reduce hallucination risk and no retention of customer code.

Key strengths

- Vendor-reported <1.1% false-positive rate, consistently cited in independent surveys (VDC Research 2025 platinum vendor recognition).

- Reachability analysis traces data flows to show whether tainted data can reach sensitive sinks.

- Broad language coverage (100+ languages and frameworks) and integration with 40+ DevOps tools.

Potential limitations

- Veracode Fix resolves findings from Pipeline Scan specifically - not all Veracode scanning modes.

- Like all AI remediation, patches still need engineering review to ensure correctness and compatibility.

- Can be expensive for small teams given the enterprise-focused pricing model.

Best for: Security leaders who prioritize accuracy and compliance, especially when burning down security debt across large application portfolios.

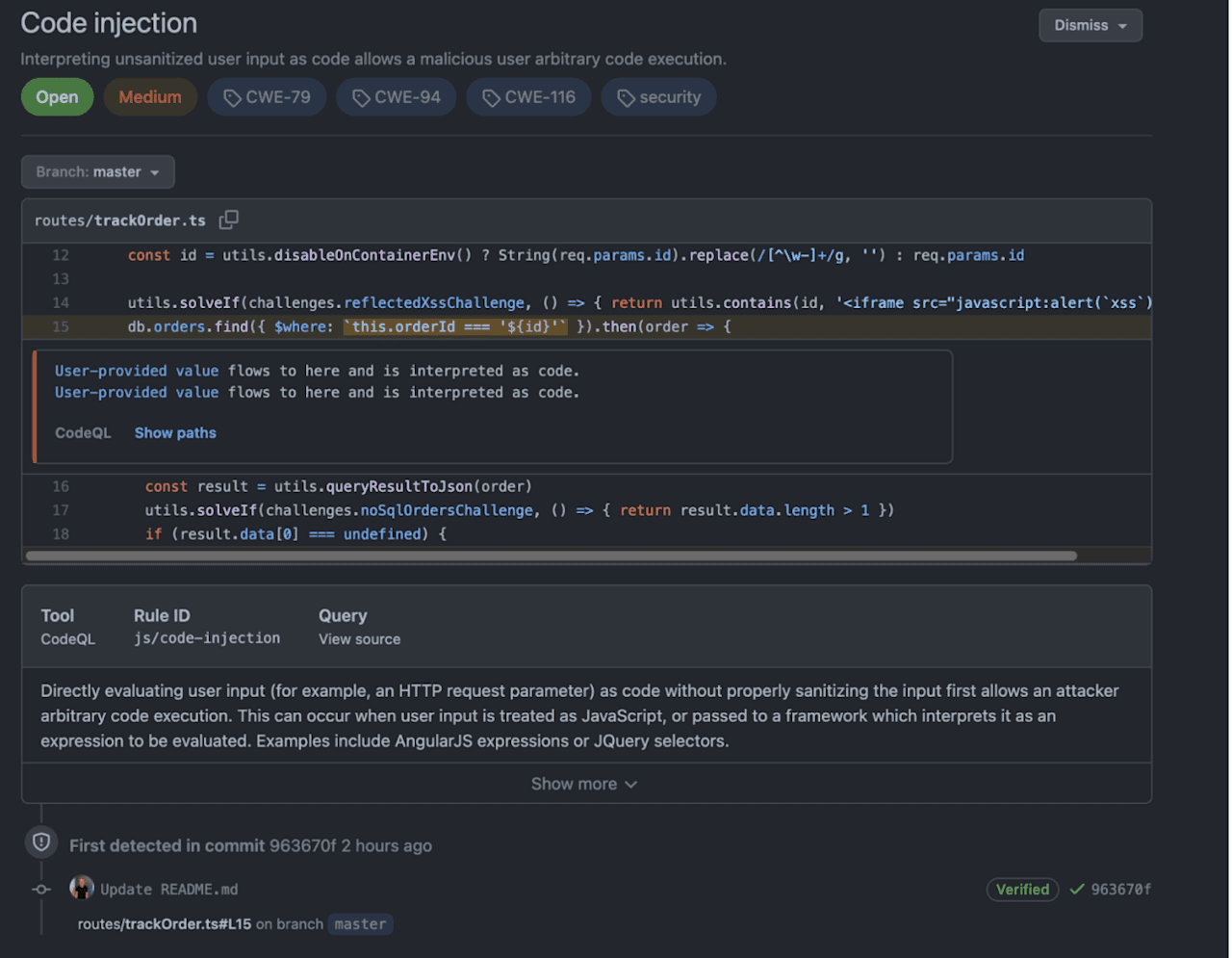

6. GitHub Advanced Security (CodeQL + Copilot Autofix)

GitHub’s native SAST offering uses CodeQL for semantic analysis and Copilot Autofix for AI-generated remediation, tightly integrated into the GitHub workflow.

How it uses AI

- CodeQL constructs a database of code and runs semantic queries to trace data flows across functions and modules.

- Copilot Autofix (powered by GPT-4.1) automatically suggests code fixes for 90% of alert types, with claims of 3x faster remediation.

- Secret scanning and push protection complement the SAST capabilities.

Key strengths

- Seamless GitHub integration: scanning, alerts, and fixes all live where developers already work.

- Thousands of curated CodeQL queries selected for high accuracy.

- Free for open-source projects; Copilot Autofix suggestions can often be committed with minimal edits.

Potential limitations

- Supports ~10 languages - significantly fewer than most competitors.

- Can produce many false positives and scan slowly without heavy query customization (noted in independent evaluations and Doyensec benchmarks).

- Custom queries use CodeQL’s own DSL with a steep learning curve.

- Requires a paid license (GHAS) for private repositories.

Best for: Teams already committed to GitHub that want native, zero-friction SAST without introducing another vendor.

7. Fortify (OpenText)

OpenText Fortify is one of the longest-running SAST platforms, and the addition of Fortify Aviator brings LLM-powered analysis and remediation to its two-decade foundation.

How it uses AI

- Fortify Aviator combines LLM-powered code analysis with Fortify’s historical SAST database to perform deep semantic scans.

- A prediction model classifies findings as true vulnerabilities or false positives and explains why, learning from past fixes and developer feedback.

- Provides contextualized code-fix suggestions (complete code blocks) directly in the developer environment.

Key strengths

- Deep institutional knowledge: 20 years of SAST rules and vulnerability data inform the AI layer.

- Flexible deployment: cloud, on-premises, or managed service.

- Supports 35+ languages and 1,600+ vulnerability types across SAST and DAST.

Potential limitations

- Aviator is currently available to Fortify on Demand customers - not all deployment models.

- No publicly disclosed false-positive rate; false positives are acknowledged as inevitable (mitigated via scan policies and AI classification).

- The platform can feel dated compared to developer-first tools built in the last five years.

Best for: Organizations already invested in Fortify that want to add AI capabilities without migrating to a new platform.

8. Qwiet AI (Harness)

Qwiet AI (acquired by Harness) positions itself around scan speed, reachability, and AI-generated fixes. Its technical approach centers on Code Property Graph (CPG) analysis plus LLM-driven remediation.

How it uses AI

- Uses a Code Property Graph that integrates data flow, control flow, and syntax tree analysis for deeper context and prioritization.

- AutoFix uses large language models to generate code fix suggestions with CPG-derived context; the LLM runs in Qwiet’s virtual private cloud with no customer data used for model training.

- Vendor-published bakeoff reports show scan time and false-positive deltas across real applications.

Key strengths

- Speed claims are unusually specific (including vendor-published comparative data).

- AutoFix implementation details are clearly documented, including deployment model and data-handling commitments.

- Candid about AI limitations and the need for developer review - a healthy posture for 2026.

Potential limitations

- AutoFix is not enabled by default and only generates suggestions for a limited subset of top findings.

- Quantitative claims are often based on vendor materials - validate with your own repos and workflows.

- Smaller language coverage than some competitors.

Best for: DevSecOps teams that measure success in time saved and triage reduction, and want the fastest possible scan-to-fix cycle.

9. SonarQube

SonarQube (by Sonar) is one of the most widely adopted code-quality and security analysis platforms, used by thousands of organizations for both quality gates and SAST. In 2026, Sonar has added AI CodeFix and expanded its security rule coverage.

How it uses AI

- AI CodeFix (available in SonarQube Cloud and recent Server editions) generates fix suggestions for security issues and code smells using LLMs.

- Detection still relies primarily on deterministic, precision-tuned rules - AI is used for remediation, not scanning.

- Clean as You Code methodology encourages fixing issues in new code rather than tackling legacy backlogs.

Key strengths

- Combines code quality and security in a single platform - teams get linting, code smells, bugs, and vulnerability detection in one scan.

- Very large rule library across 30+ languages, with rules mapped to OWASP, CWE, and SANS standards.

- Free Community Edition makes it accessible for small teams; self-hosted deployment gives full control over data.

- Mature CI/CD integrations and a well-understood quality-gate workflow.

Potential limitations

- Security detection is rule-based and pattern-driven - less effective at catching business-logic flaws or novel vulnerability classes.

- AI CodeFix is relatively new and limited to certain editions and languages.

- The platform’s strength is breadth (quality + security) rather than depth in security-specific areas like reachability analysis.

Best for: Teams that want a unified code-quality and security platform with broad language support, especially those already using SonarQube for quality gates.

10. Endor Labs

Endor Labs entered the SAST space from a software-composition-analysis (SCA) background, bringing deep dependency and reachability analysis to static security testing. Its differentiator is function-level reachability that connects first-party code vulnerabilities to actual execution paths.

How it uses AI

- Uses call-graph and reachability analysis to determine whether vulnerabilities in both first-party and third-party code are actually reachable in production.

- AI-assisted prioritization filters findings based on context: is the vulnerable function called? Is it exposed to untrusted input?

- Integrates SCA and SAST findings into a unified risk view, reducing the “two separate dashboards” problem.
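The reachability idea described above can be sketched as a graph search: given a call graph and a set of entry points, is there a call path to the vulnerable function? This is a simplified illustration, not Endor Labs' implementation. The call graph and function names are hypothetical, and real tools must also build the graph from source and handle dynamic dispatch.

```python
from collections import deque


def reachable_path(call_graph, entry_points, target):
    """Breadth-first search; return the shortest call path to target, or None."""
    queue = deque((entry, [entry]) for entry in entry_points)
    seen = set(entry_points)
    while queue:
        fn, path = queue.popleft()
        if fn == target:
            return path
        for callee in call_graph.get(fn, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append((callee, path + [callee]))
    return None


# Hypothetical call graph: caller -> list of callees.
CALL_GRAPH = {
    "handle_upload": ["parse_request", "save_file"],
    "save_file": ["yaml_load"],
    "admin_cli": ["legacy_import"],
    "legacy_import": ["pickle_load"],
}

if __name__ == "__main__":
    # Reachable from the web entry point, so worth prioritizing.
    print(reachable_path(CALL_GRAPH, ["handle_upload"], "yaml_load"))
    # Not reachable from the web entry point, so a candidate for deprioritizing.
    print(reachable_path(CALL_GRAPH, ["handle_upload"], "pickle_load"))
```

Returning the path, rather than a yes/no answer, mirrors why reachability helps triage: a developer can see how untrusted input would actually arrive at the flagged function.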

Key strengths

- Function-level reachability across first-party and third-party code is unusually granular for a SAST tool.

- Unified SCA + SAST view helps teams prioritize across dependency and code-level risks together.

- Strong focus on reducing alert fatigue through context-aware filtering.

Potential limitations

- SAST capabilities are newer compared to the established SCA offering - depth of security-rule coverage may lag dedicated SAST vendors.

- Language and framework support for SAST is still expanding.

- Auto-fix capabilities are limited compared to tools that have invested heavily in AI remediation.

Best for: Organizations with heavy open-source usage that want reachability-aware SAST tightly integrated with dependency analysis.

How to choose the right SAST tool

The “right” SAST tool is the one that produces trusted signal and fits your engineers’ flow. That usually matters more than the longest feature list.

Name your bottleneck first

Most teams struggle with one of three things:

- Too much noise: You need fewer false positives and better triage. Look at tools with strong AI-based noise reduction (Corgea, Semgrep Assistant, Veracode).

- Slow remediation: You find issues fine, but fixing them takes too long. Prioritize tools with validated auto-fix workflows (Corgea, Snyk Agent Fix, Veracode Fix, Copilot Autofix).

- Blind spots: Your current scanner misses logic flaws, authentication issues, or cross-module data flows. Evaluate AI-native detection (Corgea) or semantic analysis (CodeQL, Qwiet CPG).

Run a realistic pilot

Measure workflow outcomes, not just vulnerability counts:

- Mean time to a clean triaged list - not just scan time.

- Reduction in duplicate or low-confidence findings - actual noise reduction.

- Fix acceptance rate and regression rate - do AI fixes help, and do they break things?
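One way to keep a pilot honest is to record a handful of counts per tool and compute the same ratios for each. The sketch below is an assumption about how you might structure that. The field names are invented, and the counts should come from your own pilot rather than vendor materials.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    findings_reported: int  # everything the tool flagged during the pilot
    true_positives: int     # findings a reviewer confirmed as real
    fixes_suggested: int    # AI-generated fixes offered to developers
    fixes_accepted: int     # suggested fixes that were merged
    fixes_reverted: int     # merged fixes later rolled back or re-patched


def _ratio(numerator, denominator):
    # Return 0.0 instead of raising when a pilot has no data for a metric.
    return numerator / denominator if denominator else 0.0


def pilot_metrics(r):
    false_positives = r.findings_reported - r.true_positives
    return {
        "precision": _ratio(r.true_positives, r.findings_reported),
        "false_positive_share": _ratio(false_positives, r.findings_reported),
        "fix_acceptance_rate": _ratio(r.fixes_accepted, r.fixes_suggested),
        "fix_regression_rate": _ratio(r.fixes_reverted, r.fixes_accepted),
    }


if __name__ == "__main__":
    # Illustrative numbers only, not measurements of any tool.
    print(pilot_metrics(PilotResult(200, 150, 100, 60, 3)))
```

Note that `false_positive_share` here is the share of reported findings that turned out to be false, which is what a developer experiences as noise. Vendors may define "false-positive rate" differently, so ask which denominator a published figure uses.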

Demand safe remediation ergonomics

In 2026, AI-generated fixes should come with guardrails: quality gates, retesting, confidence thresholds, or review workflows. “Fast wrong fixes” are worse than slow correct ones. Ask vendors how they validate AI-generated patches before they reach developers.
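The guardrail pattern is easy to state in code: generate a small number of candidate patches, and only surface one that passes both a rescan and your tests. The sketch below is a generic illustration under that assumption, not any vendor's pipeline. The two check functions are deliberately trivial stand-ins for a real scanner and a real test suite.

```python
def first_validated_patch(candidates, rescan_is_clean, tests_pass, max_candidates=5):
    """Return the first candidate that passes both checks, or None."""
    for patch in list(candidates)[:max_candidates]:
        if rescan_is_clean(patch) and tests_pass(patch):
            return patch
    return None  # Nothing validated: fall back to human remediation.


def no_eval(patch):
    # Stand-in for re-running the scanner on the patched code.
    return "eval(" not in patch


def compiles(patch):
    # Stand-in for a test suite: here, just "is this a valid expression?".
    try:
        compile(patch, "<patch>", "eval")
        return True
    except SyntaxError:
        return False


if __name__ == "__main__":
    candidates = ["eval(x)", "int(x) +", "int(x)"]
    print(first_validated_patch(candidates, no_eval, compiles))
```

The first candidate still contains the flagged call and the second does not parse, so only the third is returned. Returning `None` when nothing validates is the important design choice: an unvalidated suggestion never reaches the developer.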

Evaluate integration depth

The best SAST tool is one your developers will actually use. Check:

- Does it run in your IDE and CI/CD pipeline?

- Does it surface findings in pull requests where decisions happen?

- Does it integrate with your ticketing and reporting systems?

Consider total cost of ownership

Beyond license fees, factor in:

- Setup and rule-tuning time

- Ongoing maintenance and rule updates

- Developer time spent triaging false positives

- Training costs for custom DSLs vs. natural-language policy tools
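These factors can be folded into a rough first-year estimate. The formula below is a simplifying assumption: it treats all labor at one hourly rate and counts only false-positive triage. The parameter names and sample inputs are made up for illustration.

```python
def annual_tco(
    license_fee,
    setup_hours,
    maintenance_hours,
    training_hours,
    findings_per_year,
    false_positive_share,
    minutes_per_triage,
    hourly_rate,
):
    """Estimate first-year cost: license plus labor priced at hourly_rate."""
    # Developer time spent dismissing findings that were not real issues.
    triage_hours = findings_per_year * false_positive_share * minutes_per_triage / 60
    labor_hours = setup_hours + maintenance_hours + training_hours + triage_hours
    return license_fee + labor_hours * hourly_rate


if __name__ == "__main__":
    # Illustrative inputs only; substitute figures from your own pilot.
    total = annual_tco(
        license_fee=50_000,
        setup_hours=80,
        maintenance_hours=120,
        training_hours=40,
        findings_per_year=4_000,
        false_positive_share=0.25,
        minutes_per_triage=15,
        hourly_rate=100,
    )
    print(total)
```

With these sample inputs, false-positive triage alone accounts for 250 of the 490 labor hours, which is why the noise level of a tool can matter as much as its license price.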

Frequently asked questions

What is the difference between SAST and DAST?

SAST analyzes source code without executing it (white-box testing). DAST tests a running application from the outside (black-box testing). Most mature security programs use both - SAST to catch issues early in development, DAST to find runtime and configuration vulnerabilities.

Can SAST tools detect business-logic vulnerabilities?

Traditional pattern-based SAST tools generally cannot. AI-native tools that use LLMs to understand code context and intent (like Corgea) are designed to catch logic flaws such as broken authentication, insecure authorization, and missing access controls.

How many false positives should I expect from a SAST tool?

It varies widely. Vendor-reported rates range from <1.1% (Veracode) to <5% (Corgea) for purpose-built commercial tools. Untuned open-source scanners can produce significantly more. The key metric is how much developer time you spend triaging - tools with AI-powered noise filtering can dramatically reduce that burden regardless of raw false-positive counts.

Should I choose an AI-native or AI-assisted SAST tool?

AI-native tools (where AI is part of detection) tend to catch more nuanced issues but are newer and may have less benchmarking data. AI-assisted tools (where AI helps with triage and remediation on top of traditional scanning) are more proven but may miss what rules can’t express. Evaluate based on your specific gap - noise, blind spots, or remediation speed.